multi-agent round table platform

proof of concept for a decision-support platform based on a round table discussion (work in progress)

TL;DR

Roundwise is a multi-agent decision-support platform where specialized AI agents debate and collaborate under structured rules to help users make better decisions.

The system is designed to tackle complex, real-world decision-making problems by simulating a Round Table of expert advisors, enabling structured multi-perspective dialogue and context-building.

It is meant to provide a transparent, auditable environment where users can follow the reasoning process, interact with agents, and review results through a dedicated Dashboard.

Key Research Areas

Before starting development, research is needed in areas such as:

Natural Language Interaction & Semantic Understanding:

- semantic requirements mapping: to translate potentially weakly-stated user problems into structured formats for agents to work with

- topic classification & taxonomy design: to organize discussion content effectively and on the fly

- conversation-stem tracing & provenance graphs: to track and visualize the flow of reasoning and evidence throughout the discussion

Multi-Agent Systems & Collaborative Decision-Making:

- multi-agent coordination: to study and design effective collaboration protocols among AI agents (e.g., communication patterns, role assignments, etc.)

- turn orchestration: to manage the extent and flow of agent contributions

- argumentation theory: to structure debates and evaluate competing claims

- incentives & aggregation: to align agent motivations and fairly combine their inputs (e.g. social choice mechanisms, voting systems, etc.)

Human–AI Collaboration & Interaction Design:

- interface design for collaborative reasoning: to create user-friendly tools for monitoring and interacting with the system

- hybrid human-machine decision systems: to integrate human oversight with AI collaboration for improved outcomes

Governance, Evaluation & Assurance:

- evaluation metrics: to assess the quality of decisions, coherence, consistency, and reproducibility of multi-agent reasoning

- transparency & auditability: to ensure the decision-making process is interpretable and accountable, taking privacy and security considerations into account

— Malory, Thomas. Le Morte d’Arthur. Book XX, Chapter XVI

Introduction

Roundwise (tentative project name) is a collaborative decision-support platform where several AI agents, backed by different models and each with a unique role and focus, debate and cooperate under a structured set of rules to help the user reach better, more transparent decisions.

Why Roundwise?

Let’s use an analogy to introduce Roundwise.

Imagine you are a King who wants to solve a problem, say, negotiating peace among distant lands.

- Your kingdom is vast, inhabited by many kinds of beings, for example: mages, elves, and merfolk.

- Each knows their own domain deeply.

- You, on the other hand, don’t understand their world well enough to decide alone.

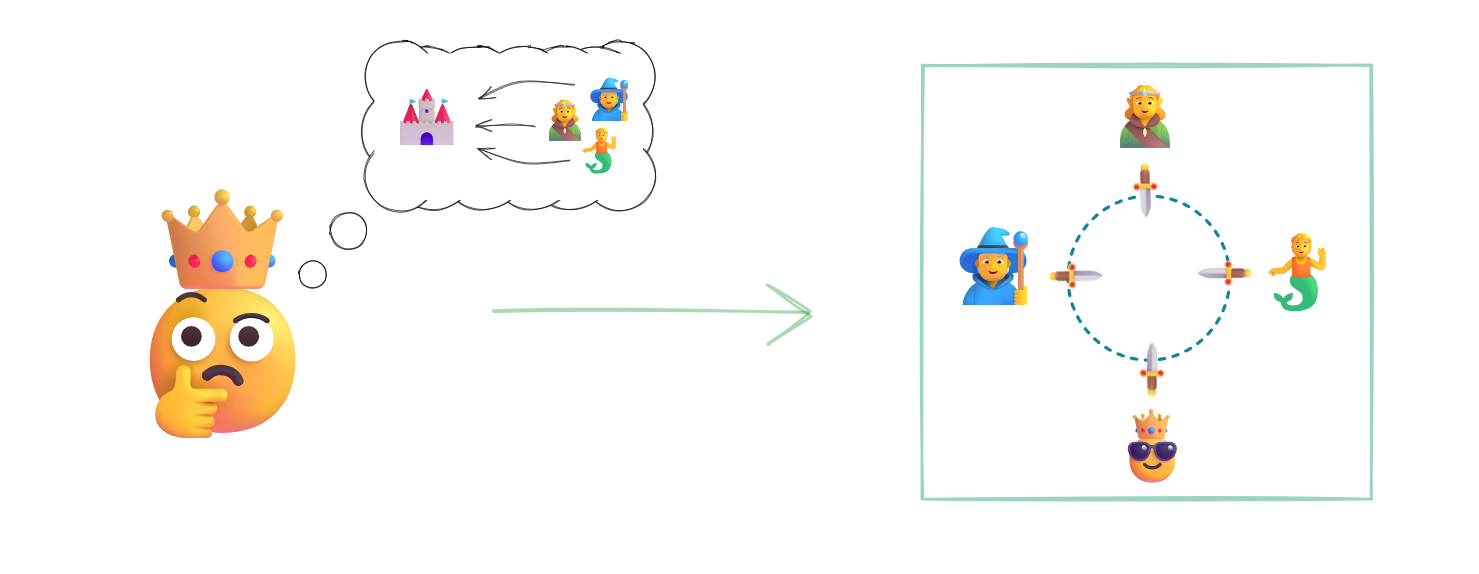

So, instead of relying on a single advisor, the King convenes a Round Table: a forum where the most capable experts discuss, challenge, and refine one another’s ideas until the best solution emerges.

Roundwise works the same way:

- You define your problem.

- A team of specialized AI agents is spawned to collaborate by taking part in a structured multi-perspective dialogue.

- Throughout the session, the user can interact with the agents via a Round Table Dashboard.

Roundwise provides you with access to diverse perspectives, structured reasoning, and transparent decision-making support.

In a nutshell, Roundwise hopes to bring the power of collaborative intelligence to real-world decision-making problems.

You, The King!

As the King, your goal is to solve complex problems in your “kingdom” (organization, research, or project). You want insight that is grounded, balanced, and efficient.

You could try several approaches:

- Think alone. You may miss critical factors or domain knowledge.

- Consult real experts. Effective, but expensive and time-consuming.

- Ask a single LLM. Useful if you can prompt well and validate results, but risky: one model might hallucinate or overlook key perspectives.

These options often fail to capture the diversity of reasoning needed for real-world problems.

That’s why the King needs a Round Table.

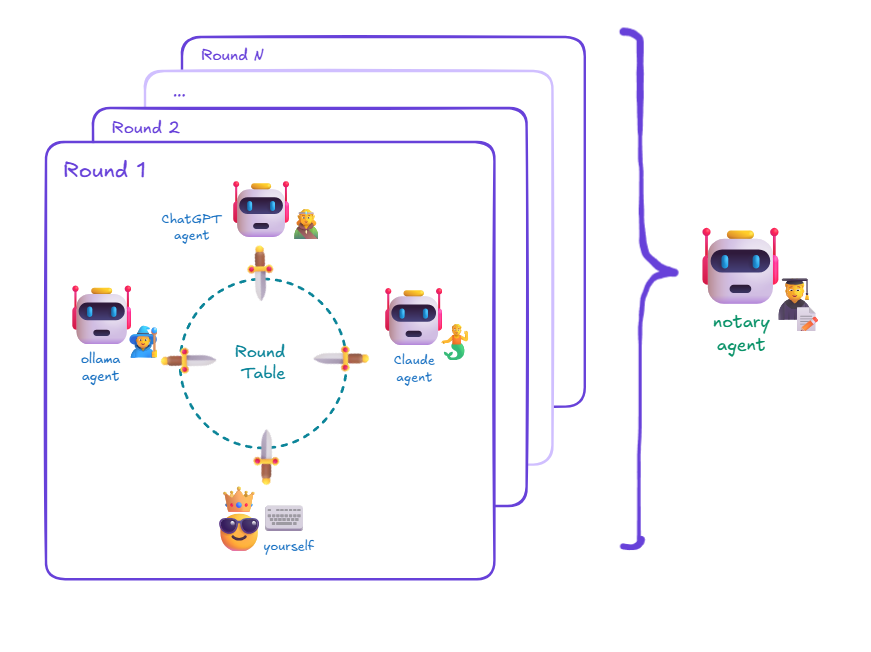

Round Table

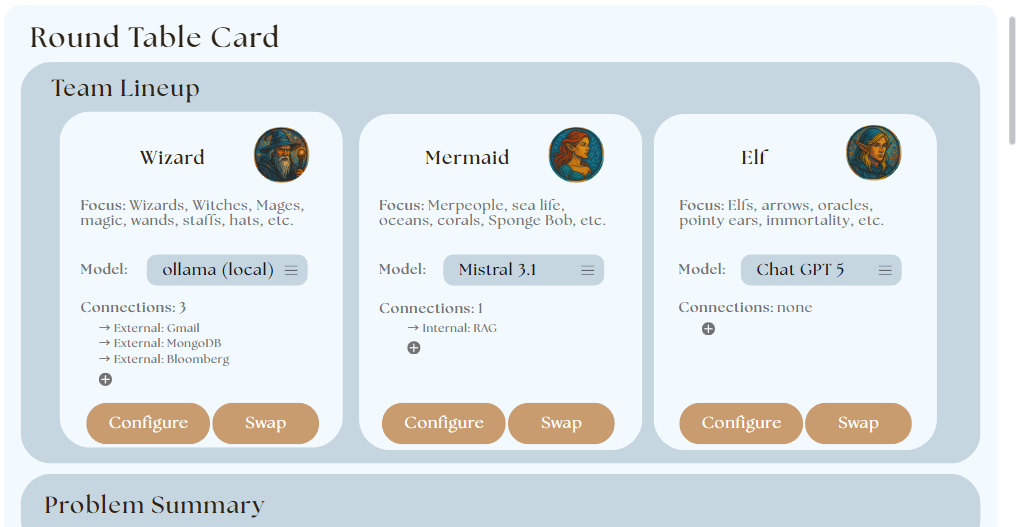

The Round Table is the central coordination mechanism of Roundwise. It’s where AI agents exchange ideas through structured rounds of discussion, guided by the rules engine. Each round refines the collective reasoning, converging on higher-quality, multi-perspective solutions.

The Round Table embodies collaborative intelligence: agents debate, challenge, and vote according to the scenario’s context.

Gatekeeper

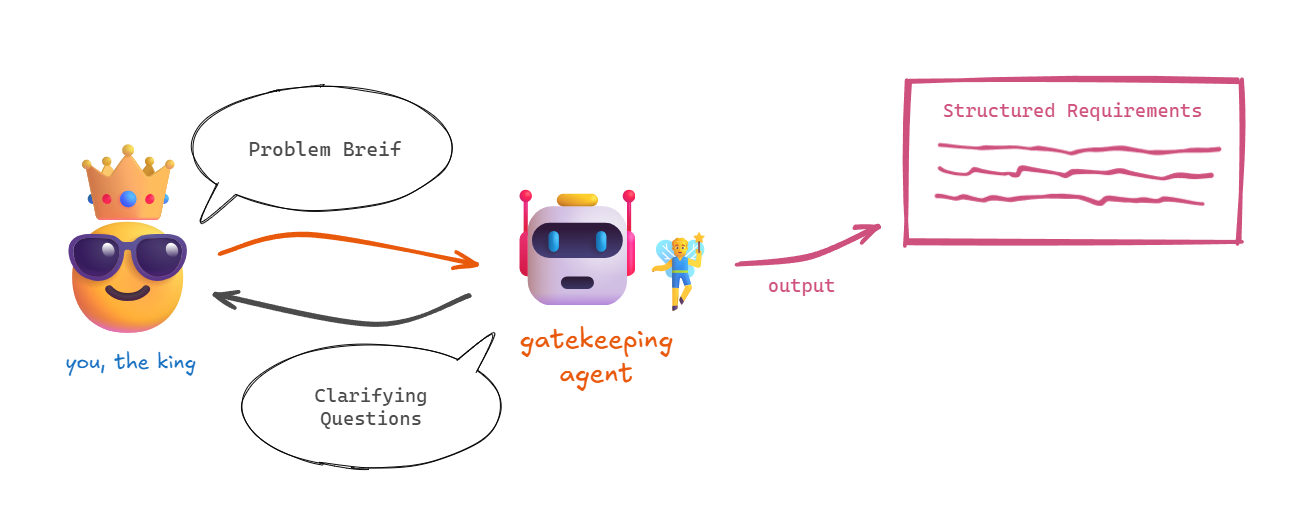

The Gatekeeper is the first agent you meet when starting a new project. It acts as a facilitator between the King and the rest of the system.

The first task of the Gatekeeper is to understand your problem statement. If information is missing, it asks clarifying questions to ensure sufficient context.

Then, it identifies the most relevant expert roles for the task (e.g., Mage, Elf, Mermaid—each representing an LLM or model specialized in a domain). Normally, 2-4 agents are selected to ensure diversity without overwhelming complexity.

Finally, it waits for your confirmation of the proposed agent lineup and your validation of the whole setup.

Once approved, the Gatekeeper instantiates the team: each agent receives a role, a system prompt, and the user’s problem context.

In essence, the Gatekeeper transforms an open-ended idea into a well-defined, multi-agent session.
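The setup flow described above (propose a lineup, wait for approval, then hand each agent a role, a system prompt, and the problem context) could be sketched roughly as follows. This is a minimal illustration, not the project's implementation: `AgentSpec`, `Session`, `propose_lineup`, `approve`, and the `placeholder-model` identifier are all hypothetical names, and treating the usual 2-4 agent range as a hard limit is a simplifying assumption.

```python
from dataclasses import dataclass, field


@dataclass
class AgentSpec:
    """One expert seat at the table (all field names are illustrative)."""
    role: str
    system_prompt: str
    model: str  # hypothetical model identifier


@dataclass
class Session:
    problem: str
    agents: list[AgentSpec] = field(default_factory=list)
    approved: bool = False


def propose_lineup(problem: str, roles: dict[str, str],
                   model: str = "placeholder-model") -> Session:
    """Build an unapproved session; `roles` maps a role name to its focus area."""
    if not 2 <= len(roles) <= 4:
        # Assumption: the "normally 2-4 agents" guideline is enforced strictly here.
        raise ValueError("the lineup should contain 2-4 agents")
    agents = [
        AgentSpec(
            role=name,
            system_prompt=f"You are the {name}. Focus on {focus}. Problem: {problem}",
            model=model,
        )
        for name, focus in roles.items()
    ]
    return Session(problem=problem, agents=agents)


def approve(session: Session) -> Session:
    """Record the user's confirmation of the proposed lineup."""
    session.approved = True
    return session
```

Keeping the proposal and the approval as separate steps mirrors the text: nothing is instantiated until the user has validated the setup.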

Expert Agents

Expert Agents are autonomous LLM-based participants with specific roles and knowledge domains. Each agent:

- Thinks and reasons within its defined expertise.

- Reads all messages from the communication channel (user, other agents).

- Participates in multiple comment rounds, contributing unique insights and critiques.

- Votes or expresses confidence on emerging solutions.

Their interaction is governed by the Round Table Rules Engine, ensuring that each round proceeds in an organized manner (e.g., discussion, rebuttal, voting, summarization).

Agents can be backed by different models or providers (e.g., ChatGPT, Claude, or local models served through Ollama) to combine diverse reasoning styles and knowledge sources.
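One way the "votes or expresses confidence" step might be combined is a confidence-weighted tally. The sketch below is only an assumption about how that could work: the `tally` function, the `(agent, option, confidence)` tuple layout, and the sample values are invented for illustration.

```python
from collections import defaultdict


def tally(votes: list[tuple[str, str, float]]) -> dict[str, float]:
    """Sum confidence per option; each vote is (agent, option, confidence in [0, 1])."""
    totals: dict[str, float] = defaultdict(float)
    for agent, option, confidence in votes:
        if not 0.0 <= confidence <= 1.0:
            raise ValueError(f"{agent}: confidence must be in [0, 1]")
        totals[option] += confidence
    return dict(totals)


# Made-up votes from three hypothetical agents.
votes = [("Mage", "treaty", 0.9), ("Elf", "treaty", 0.6), ("Mermaid", "embargo", 0.8)]
scores = tally(votes)
winner = max(scores, key=scores.get)  # option with the highest total confidence
```

A plain majority vote is the special case where every confidence is 1.0.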

Notary Agent

The Notary Agent observes every interaction at the table.

It specializes in:

- Summarizing discussion threads into structured, readable outputs.

- Monitoring conversation dynamics (e.g., detecting loops, low-quality exchanges, or convergence).

- Triggering events in the rules engine—like early stopping when consensus is reached.

- Sending consolidated summaries and metadata to the Dashboard for user review.

It acts as the official record keeper of the Round Table.
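The monitoring duties listed above (spotting convergence and loops) could start from very simple heuristics. The following sketch assumes a 75% agreement threshold and an exact-repetition loop check; both choices, and the function names, are illustrative assumptions rather than part of the design.

```python
from collections import Counter


def consensus_reached(round_votes: dict[str, str], threshold: float = 0.75) -> bool:
    """True when the most popular option holds at least `threshold` of the votes cast."""
    if not round_votes:
        return False
    top_count = Counter(round_votes.values()).most_common(1)[0][1]
    return top_count / len(round_votes) >= threshold


def is_looping(messages: list[str], window: int = 3) -> bool:
    """Crude loop check: the last `window` messages are identical after normalization."""
    recent = [m.strip().lower() for m in messages[-window:]]
    return len(recent) == window and len(set(recent)) == 1
```

When `consensus_reached` returns True, the Notary could ask the rules engine for an early stop. A real system would likely need semantic similarity rather than exact string matching to detect loops.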

Rules Engine

The Round Table Rules Engine governs the logic of every session.

It orchestrates:

- The transition between phases (e.g. setup → discussion → voting → summary).

- The timing and flow of turns within each round.

- The events triggered by the Notary or Gatekeeper (e.g., agent replacement, early stop, session end).

- The consistency and reproducibility of the debate process.

This ensures that every project follows a transparent, repeatable reasoning protocol—avoiding chaos or overtalk among agents.
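The phase logic described in this section can be pictured as a small finite-state machine. The sketch below is one possible reading: the phase names follow the setup → discussion → voting → summary example, while the event names (`next`, `early_stop`, `no_consensus`) and the `RulesEngine` class are hypothetical.

```python
from enum import Enum


class Phase(Enum):
    SETUP = "setup"
    DISCUSSION = "discussion"
    VOTING = "voting"
    SUMMARY = "summary"
    ENDED = "ended"


# Allowed transitions, keyed by (current phase, event). Event names are assumptions.
TRANSITIONS = {
    (Phase.SETUP, "next"): Phase.DISCUSSION,
    (Phase.DISCUSSION, "next"): Phase.VOTING,
    (Phase.DISCUSSION, "early_stop"): Phase.SUMMARY,
    (Phase.VOTING, "next"): Phase.SUMMARY,
    (Phase.VOTING, "no_consensus"): Phase.DISCUSSION,
    (Phase.SUMMARY, "next"): Phase.ENDED,
}


class RulesEngine:
    def __init__(self) -> None:
        self.phase = Phase.SETUP
        # Audit trail of (from_phase, event, to_phase) entries.
        self.log: list[tuple[Phase, str, Phase]] = []

    def fire(self, event: str) -> Phase:
        """Apply an event; reject anything the transition table does not allow."""
        key = (self.phase, event)
        if key not in TRANSITIONS:
            raise ValueError(f"event {event!r} not allowed in phase {self.phase.value}")
        new_phase = TRANSITIONS[key]
        self.log.append((self.phase, event, new_phase))
        self.phase = new_phase
        return new_phase
```

Because every transition goes through one table and is logged, the same sequence of events always produces the same sequence of phases, which is the repeatability property the text asks for.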

Dashboard

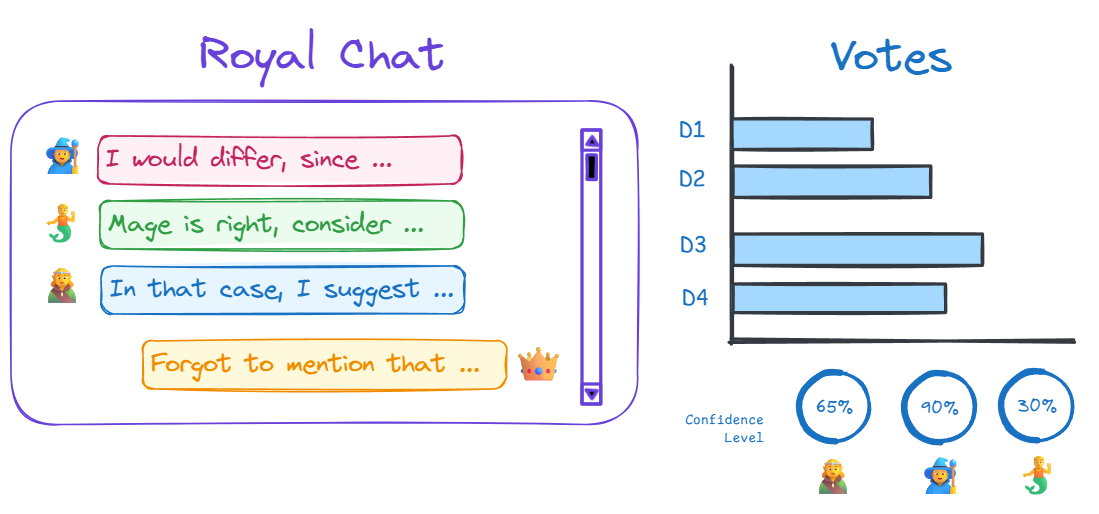

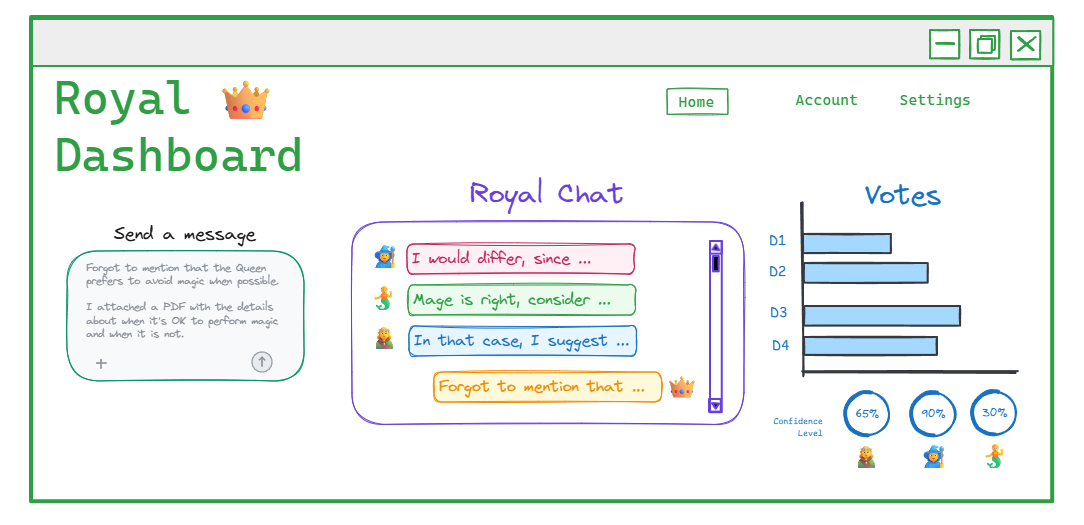

The core of Roundwise is the Dashboard, the user interface connecting you to the entire system. It is the bridge between you (the King) and the Round Table, and it supports you throughout the decision-making process.

This is where the King can follow the discussion, analyze conversation traces, interact with agents, and review results.

In particular, the Dashboard allows you to:

- Interact with the agents through the chat, sending and receiving messages transparently.

- Access the full conversation history, including metadata and reasoning traces.

- Visualize conversation graphs grouped by topics or domains.

- Monitor performance metrics, confidence levels, and vote distributions from each agent.

- Track the evolution of proposed solutions and summaries.

- Manage projects, sessions, and configuration options.

The dashboard brings transparency and control to the user, making AI collaboration auditable and interpretable.

Putting It All Together

Roundwise sets up a structured environment where multiple AI agents collaborate under human supervision to help tackle complex problems.

A team of experts at your fingertips. Structured, multi-perspective reasoning. Transparency and control.

Real World Example

Suppose a tech startup wants to decide whether to migrate from local servers to the cloud.

Here’s how Roundwise operates:

- The CEO describes the problem: “Should we move our infrastructure to the cloud?”

- The Gatekeeper asks clarifying questions about company size, security needs, and cost constraints.

- It proposes three expert agents:

  - FinOps Agent – specialized in financial trade-offs and cost projections.

  - DevOps Agent – expert in deployment, scalability, and performance.

  - Data Governance Agent – focused on compliance, privacy, and data control.

- The user approves the setup.

- The Round Table begins its first discussion round. Each agent presents arguments based on its expertise.

- The Rules Engine coordinates multiple rounds of discussion, rebuttals, and voting.

- The Notary Agent summarizes the consensus: a structured recommendation balancing cost, scalability, and risk.

- The user reads the report in the Dashboard, reviews confidence metrics, and makes an informed decision.

Next Steps

I. Perform research on key areas to inform design and implementation.

Implementing this project requires research in several areas. Below is a list of proposed topics to investigate. Insights into any of them will contribute to the realization of the project.

Multi-agent coordination and decision-making

Study of architectures and algorithms that enable multiple autonomous agents to cooperate, negotiate, or compete to reach efficient collective outcomes under uncertainty.

Interface design for collaborative reasoning

Exploration of interaction paradigms that support transparent, structured reasoning among human and AI participants.

Semantic requirements mapping

Development of methods to extract, structure, and align user-stated problems or goals (through Natural Language Interaction) with relevant ontologies or computational models.

Conversation-stem tracing & provenance graphs

Techniques to model and visualize the logical flow of a discussion, tracking claims, evidence, and dependencies over time. Uses graph-based representations for reasoning traceability and knowledge provenance.
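As a rough illustration of a graph-based representation, the sketch below stores each claim or piece of evidence as a node and records which earlier nodes it relies on. `ProvenanceGraph`, its method names, and the node fields are hypothetical, chosen only to show how tracing a claim back to its sources might work.

```python
from dataclasses import dataclass, field


@dataclass
class ProvenanceGraph:
    """Directed graph: node -> the earlier nodes it responds to or relies on."""
    nodes: dict[str, dict] = field(default_factory=dict)
    parents: dict[str, list[str]] = field(default_factory=dict)

    def add(self, node_id: str, author: str, text: str,
            relies_on: tuple[str, ...] = ()) -> None:
        # Parents must already exist, so a new node can only point at earlier ones.
        for parent in relies_on:
            if parent not in self.nodes:
                raise KeyError(f"unknown parent {parent!r}")
        self.nodes[node_id] = {"author": author, "text": text}
        self.parents[node_id] = list(relies_on)

    def trace(self, node_id: str) -> list[str]:
        """Return every ancestor of `node_id` (its provenance), nearest first."""
        seen: list[str] = []
        queue = list(self.parents[node_id])
        while queue:
            current = queue.pop(0)  # breadth-first walk towards the sources
            if current not in seen:
                seen.append(current)
                queue.extend(self.parents[current])
        return seen
```

Calling `trace` on a final recommendation would list the claims and evidence it ultimately depends on, which is the kind of traceability a dashboard view could visualize.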

Turn orchestration in conversational systems

Research on coordination mechanisms that govern when and how multiple conversational agents or modules contribute to dialogue. Focus on turn-taking policies, dialogue management, and synchronization for coherent multi-party interaction.
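One of the simplest turn-taking policies is round-robin with a rotating first speaker. The sketch below is an assumption about what a baseline policy could look like, not a proposal from the project; the function name and the rotation rule are illustrative.

```python
from collections.abc import Iterator


def round_robin(agents: list[str], rounds: int) -> Iterator[tuple[int, str]]:
    """Yield (round_number, agent) pairs in a fixed, reproducible order."""
    if not agents:
        raise ValueError("at least one agent is required")
    for round_number in range(1, rounds + 1):
        # Rotate the starting speaker each round so no agent always goes first.
        offset = (round_number - 1) % len(agents)
        for agent in agents[offset:] + agents[:offset]:
            yield round_number, agent
```

For example, `list(round_robin(["A", "B", "C"], 2))` gives round 1 in the order A, B, C and round 2 in the order B, C, A. More adaptive policies (for instance, letting an agent speak only when it has something to rebut) are exactly what this research topic would explore.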

Incentives & aggregation (game theory, mechanism design, social choice)

Design of mechanisms that align agent incentives and fairly aggregate preferences or beliefs in group decision processes.
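As a concrete example of a social choice mechanism, the Borda count lets each agent rank all options and awards points by position. The sketch below uses made-up ballots; whether Roundwise would use Borda or another rule is an open question for this research area.

```python
def borda(rankings: list[list[str]]) -> dict[str, int]:
    """Borda count: with n options, a ballot gives n-1 points to its first choice, down to 0."""
    if not rankings:
        raise ValueError("at least one ballot is required")
    options = set(rankings[0])
    scores = {option: 0 for option in options}
    for ballot in rankings:
        if set(ballot) != options or len(ballot) != len(options):
            raise ValueError("every ballot must rank every option exactly once")
        for position, option in enumerate(ballot):
            scores[option] += len(ballot) - 1 - position
    return scores


# Made-up rankings from three hypothetical agents, best option first.
ballots = [
    ["cloud", "hybrid", "on-prem"],
    ["hybrid", "cloud", "on-prem"],
    ["hybrid", "on-prem", "cloud"],
]
result = borda(ballots)  # cloud: 3 points, hybrid: 5 points, on-prem: 1 point
```

Unlike a plain plurality vote, this rule rewards options that are broadly acceptable even when they are not everyone's first choice.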

Topic classification & taxonomy design

Construction of adaptive classification frameworks and semantic taxonomies that organize discussions, arguments, or research domains. Combines NLP, clustering, and ontology learning.

Argumentation theory & MCDA

Integration of formal argumentation frameworks with multi-criteria decision analysis to evaluate competing alternatives through structured reasoning, evidence weighting, and preference modeling.
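On the MCDA side, the simplest method is the weighted-sum model, where each alternative gets a score per criterion and the criteria carry weights. The sketch below uses invented criteria, weights, and scores purely for illustration; it does not cover the argumentation-framework half of this topic.

```python
def weighted_sum(
    option_scores: dict[str, dict[str, float]],
    weights: dict[str, float],
) -> dict[str, float]:
    """Weighted-sum model: score(option) = sum of weight * criterion score, normalized by total weight."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    result = {}
    for option, by_criterion in option_scores.items():
        missing = set(weights) - set(by_criterion)
        if missing:
            raise ValueError(f"{option} is missing scores for {sorted(missing)}")
        result[option] = sum(weights[c] * by_criterion[c] for c in weights) / total_weight
    return result


# Made-up numbers: higher is better on every criterion, scores in [0, 1].
weights = {"cost": 0.5, "scalability": 0.3, "risk": 0.2}
options = {
    "cloud": {"cost": 0.6, "scalability": 0.9, "risk": 0.5},
    "on-prem": {"cost": 0.4, "scalability": 0.5, "risk": 0.8},
}
ranking = weighted_sum(options, weights)  # cloud is about 0.67, on-prem about 0.51
```

In a combined approach, the per-criterion scores could come from the outcome of structured arguments instead of being entered by hand.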

Hybrid human–machine decision systems & collective intelligence

Study of systems combining human judgment and machine intelligence to improve group decision quality. Explores coordination models, trust calibration, and emergent collective behavior in mixed teams.

Evaluation metrics for multi-agent reasoning systems

Development of quantitative and qualitative metrics to assess the performance, coherence, consistency, and reproducibility of multi-agent collaborative reasoning processes.

Transparency, auditability, and accountability in AI systems

Research on methods to ensure that multi-agent AI systems provide interpretable, auditable decision-making processes, including provenance tracking, explanation generation, and governance frameworks.

II. Design the system architecture and components.

III. Develop a minimum viable product (MVP) to validate core functionalities.

IV. Test with real-world scenarios and gather user feedback.

V. Iterate and refine based on insights and performance metrics.

Rodrigo Cortés © 2025 all rights reserved. Last updated: October 2025